Your attack surface represents all of the ways an attacker can gain access to sensitive data and compromise the applications your organization is trying to protect. Attackers can leverage hundreds of different attack vectors to get into an organization, from compromised credentials and social engineering to more advanced techniques such as exploiting a zero-day vulnerability.

Unfortunately for the good guys, the bad guys only need to find one exploitable weakness to make their way in, and they have hundreds of attack vectors to choose from. The solution? Make your attack surface as small as possible, which limits the number of places where those attack vectors can be used. This strategy works as well for your public cloud deployments as it does elsewhere in your organization.

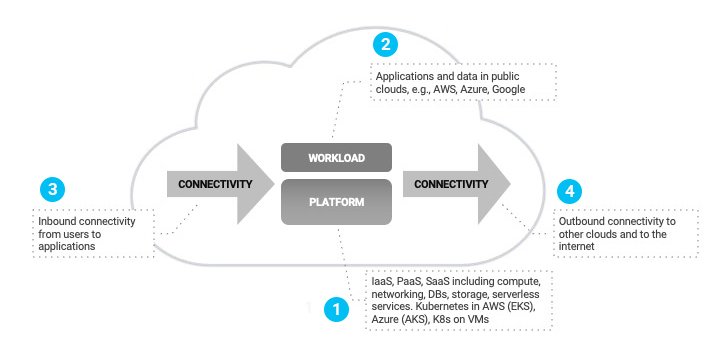

There are four key components of your public cloud attack surface that should be minimized:

- Platforms

- Workloads

- Inbound connectivity

- Outbound connectivity

1. Platforms

Start by understanding the security posture of the underlying platform(s) for your cloud environment. If you’re using one of the three major cloud platforms (AWS, Azure, GCP), your concern should largely revolve around your organization’s use of these platforms rather than the security of the platforms themselves. These providers have invested heavily in security, hold a broad range of industry certifications, and run platforms that are used and tested by tens of thousands of organizations. Under their shared responsibility models, the provider secures the underlying infrastructure, while you remain responsible for how you configure and use its services.

What they can’t ensure, however, is that your organization has configured their many features and services securely. If you search the term “AWS S3 exposure,” you’ll find plenty of articles about enterprises that made disastrous configuration mistakes, resulting in embarrassing and costly incidents.

To analyze your configurations, start with a comprehensive inventory of everything across your entire cloud footprint, including IaaS, PaaS, containers, serverless functions, and more. Because cloud environments change constantly, monitor them continuously so that you’re always aware of changes.
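Continuous monitoring often comes down to comparing successive inventory snapshots and flagging what changed. The sketch below assumes a hypothetical snapshot format (a dict mapping a resource ID to its configuration); in practice the snapshots would come from your provider’s APIs or an inventory tool, and the resource names here are made up for illustration.

```python
from typing import Any, Dict, List

# Hypothetical snapshot format: resource ID -> configuration dict.
Snapshot = Dict[str, Dict[str, Any]]


def diff_inventory(old: Snapshot, new: Snapshot) -> Dict[str, List[str]]:
    """Report resources that were added, removed, or reconfigured between two snapshots."""
    added = sorted(new.keys() - old.keys())
    removed = sorted(old.keys() - new.keys())
    changed = sorted(rid for rid in old.keys() & new.keys() if old[rid] != new[rid])
    return {"added": added, "removed": removed, "changed": changed}


before = {
    "bucket/logs": {"type": "storage", "public": False},
    "vm/web-1": {"type": "vm", "open_ports": [443]},
}
after = {
    "bucket/logs": {"type": "storage", "public": True},  # someone made it public
    "fn/resize": {"type": "serverless"},                 # new function deployed
}
print(diff_inventory(before, after))
# {'added': ['fn/resize'], 'removed': ['vm/web-1'], 'changed': ['bucket/logs']}
```

Each non-empty diff is a trigger to re-evaluate the affected resources against your policies rather than waiting for a periodic audit.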

From there, map your inventory to the policies your organization has put in place to minimize the risk of exposure from insecure cloud platform configurations. Obviously, you don’t want publicly exposed S3/storage buckets, but many other policies, such as requiring encryption at rest or blocking administrative ports from the internet, go a long way toward reducing your exposure to the hundreds of attack vectors mentioned earlier in this post.
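Mapping an inventory to policies can be pictured as running every applicable check against every resource. This is a minimal sketch under assumed names: the `Policy` class, the three example policies, and the inventory fields (`type`, `public`, `encrypted`, `open_ports`) are all hypothetical, not any particular product’s policy format.

```python
from dataclasses import dataclass
from typing import Any, Callable, Dict, List

Resource = Dict[str, Any]  # hypothetical: one inventory entry


@dataclass
class Policy:
    name: str
    applies_to: str                    # resource type the policy covers
    check: Callable[[Resource], bool]  # returns True when the resource complies


POLICIES = [
    Policy("no-public-storage", "storage", lambda r: not r.get("public", False)),
    Policy("encryption-at-rest", "storage", lambda r: r.get("encrypted", False)),
    Policy("no-open-ssh", "vm", lambda r: 22 not in r.get("open_ports", [])),
]


def evaluate(inventory: Dict[str, Resource]) -> List[str]:
    """Return 'resource_id: policy_name' for every policy violation found."""
    violations = []
    for rid, res in sorted(inventory.items()):
        for policy in POLICIES:
            if res.get("type") == policy.applies_to and not policy.check(res):
                violations.append(f"{rid}: {policy.name}")
    return violations


inventory = {
    "bucket/logs": {"type": "storage", "public": True, "encrypted": True},
    "vm/bastion": {"type": "vm", "open_ports": [22, 443]},
}
print(evaluate(inventory))
# ['bucket/logs: no-public-storage', 'vm/bastion: no-open-ssh']
```

Expressing policies as data plus small predicates keeps adding a new rule cheap, which matters because the useful policy set grows well beyond public buckets.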

Finally, automate remediation wherever possible so that, as changes occur, your configuration stays aligned with your policies.
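Automatic remediation pairs each compliance check with a corrective action. The sketch below is illustrative only: the rule names, the fix functions, and the dict-based resources are hypothetical, and a real remediation step would call the cloud provider’s API rather than edit a dict in memory.

```python
from typing import Any, Callable, Dict, List, Tuple

Resource = Dict[str, Any]
Check = Callable[[Resource], bool]
Fix = Callable[[Resource], None]


def block_public_access(res: Resource) -> None:
    res["public"] = False  # stand-in for a provider API call


def enable_encryption(res: Resource) -> None:
    res["encrypted"] = True  # stand-in for a provider API call


# Hypothetical rules: policy name -> (compliance check, remediation action).
RULES: Dict[str, Tuple[Check, Fix]] = {
    "no-public-storage": (lambda r: not r.get("public", False), block_public_access),
    "encryption-at-rest": (lambda r: r.get("encrypted", False), enable_encryption),
}


def remediate(inventory: Dict[str, Resource]) -> List[str]:
    """Fix non-compliant storage resources in place and return an audit log."""
    log = []
    for rid, res in sorted(inventory.items()):
        if res.get("type") != "storage":
            continue
        for name, (check, fix) in RULES.items():
            if not check(res):
                fix(res)
                log.append(f"remediated {rid}: {name}")
    return log


inventory = {"bucket/logs": {"type": "storage", "public": True, "encrypted": False}}
print(remediate(inventory))
# ['remediated bucket/logs: no-public-storage', 'remediated bucket/logs: encryption-at-rest']
print(remediate(inventory))  # [] because the bucket is now compliant
```

Keeping each fix idempotent means the same loop can run on every detected change without side effects on resources that already comply, and the returned log gives you an audit trail of what automation touched.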

2. Workloads

Even with the cloud platform properly configured, identity-based workload segmentation is critical to further shrinking your attack surface and stopping lateral threat movement. This step limits the damage attackers can cause if they do make their way in.

Bad actors can wreak havoc on flat networks that allow unchecked lateral movement. But segmenting a network the typical way, with network-based firewall policies, quickly becomes unmanageably complex, which leads to human error and exposed workloads.

Workload identity verification limits access to known applications and services, and that verification should cover the actual software in question, down to the subprocess level. For example, it’s not enough to simply allow or block Python scripts. You want to allow the specific Python scripts your applications need to operate properly and block everything else.
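One common way to tie identity to the actual software, rather than to the interpreter that runs it, is a cryptographic hash of its contents. This is a simplified sketch assuming an allowlist of SHA-256 fingerprints; the script contents are hypothetical, and production workload identity systems typically combine several attributes rather than relying on a single hash.

```python
import hashlib
from typing import Set


def fingerprint(code: bytes) -> str:
    """Identify a piece of software by the SHA-256 hash of its contents."""
    return hashlib.sha256(code).hexdigest()


# Hypothetical allowlist built from the scripts your application actually needs.
approved_script = b"print('resize images')\n"
ALLOWED: Set[str] = {fingerprint(approved_script)}


def is_allowed(code: bytes) -> bool:
    """Default deny: only software whose fingerprint is on the allowlist is trusted."""
    return fingerprint(code) in ALLOWED


print(is_allowed(approved_script))                  # True
print(is_allowed(b"import os; os.system('sh')\n"))  # False
```

Both scripts run under the same `python` binary, so a rule that only looked at the interpreter could not tell them apart; the content hash can.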

From there, identity-based segmentation eliminates every network path that isn’t necessary for your business applications to function properly. The result? Only known, verified software can communicate, and only over the approved pathways it actually needs.
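Identity-based segmentation can be modeled as a default-deny allowlist of flows between verified workload identities. The identities, ports, and the `Flow` type below are hypothetical, chosen only to illustrate the idea.

```python
from typing import FrozenSet, NamedTuple


class Flow(NamedTuple):
    source: str       # verified workload identity of the caller
    destination: str  # verified workload identity of the callee
    port: int


# Hypothetical approved pathways; anything not listed is denied.
APPROVED: FrozenSet[Flow] = frozenset({
    Flow("web-frontend", "orders-api", 443),
    Flow("orders-api", "orders-db", 5432),
})


def permit(flow: Flow) -> bool:
    """Default deny: a flow is allowed only if it exactly matches an approved pathway."""
    return flow in APPROVED


print(permit(Flow("web-frontend", "orders-api", 443)))  # True
print(permit(Flow("web-frontend", "orders-db", 5432)))  # False: no direct path to the DB
```

Because the rules name identities rather than IP addresses, they do not need rewriting when workloads scale out or change addresses, which is one way this approach sidesteps the rule sprawl of address-based firewall policies.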

3. Inbound connectivity

Zero trust remote access has quickly become a preferred method for remote user connectivity, and cloud workload access is no exception. With zero trust, applications are never exposed directly to the internet, which makes them invisible to unauthorized users. Invisibility is a superpower that everyone wants, and zero trust delivers!

Whether you have a privileged user managing an application with Secure Shell (SSH), or a business partner accessing a web-based application, zero trust allows you to provide access to applications, not to your network, thereby limiting potential threats from the outside. With this technique, even authorized users aren’t provided unfettered network access. They get access only to the applications they need to do their jobs, and nothing more.
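The key difference from network access is what the access decision is about: a named application, not a subnet. A minimal sketch, assuming a hypothetical entitlement table keyed by user; the user names and application names are illustrative.

```python
from typing import Dict, Set

# Hypothetical entitlements: user -> applications that user may reach.
ENTITLEMENTS: Dict[str, Set[str]] = {
    "alice@example.com": {"billing-admin-ssh"},
    "partner@supplier.example": {"orders-portal"},
}


def authorize(user: str, app: str, authenticated: bool) -> bool:
    """Grant access to one named application, never to a network segment."""
    if not authenticated:
        return False
    return app in ENTITLEMENTS.get(user, set())


print(authorize("partner@supplier.example", "orders-portal", True))      # True
print(authorize("partner@supplier.example", "billing-admin-ssh", True))  # False
print(authorize("alice@example.com", "billing-admin-ssh", False))        # False
```

Note that nothing in the request names an IP range or subnet: even an authenticated partner can reach only the one application it is entitled to.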

4. Outbound connectivity

Most cloud workloads need two forms of outbound connectivity: cloud-to-internet, and cloud-to-other-clouds or private data centers.

Cloud workloads require internet access for a variety of reasons, including software update services and API connectivity to other applications. Unfortunately, direct internet access increases risk. Routing that access through a security intermediary that scans for malware and other malicious traffic reduces the risk of an internet-based attack.
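At the application level, sending outbound HTTP(S) through an intermediary can be as simple as configuring a proxy. This sketch uses the standard library’s `urllib.request.ProxyHandler`; the proxy address is a hypothetical placeholder for whatever inspecting intermediary you deploy.

```python
import urllib.request

# Hypothetical address of the security intermediary (for example, an inspecting proxy).
EGRESS_PROXY = "http://egress-proxy.internal:8080"


def build_egress_opener() -> urllib.request.OpenerDirector:
    """Build a URL opener that sends HTTP and HTTPS traffic via the proxy instead of directly."""
    proxy = urllib.request.ProxyHandler({"http": EGRESS_PROXY, "https": EGRESS_PROXY})
    return urllib.request.build_opener(proxy)


opener = build_egress_opener()
# opener.open("https://example.com/") would now be relayed through EGRESS_PROXY.
```

Application-level proxy settings are easy to bypass, so you would typically also block direct egress at the network layer (route tables or firewall rules) so that the intermediary is the only way out. For HTTPS, `urllib` tunnels through the proxy with `CONNECT`, so scanning the content itself additionally requires the intermediary to inspect TLS.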

Securing communications to other clouds and data centers is another important aspect of minimizing your cloud attack surface. As with user access, zero trust principles should be applied, ensuring that applications can securely connect to other applications, not networks. By eliminating open network connectivity, you can ensure that even if an application is compromised, the attacker’s ability to do damage across your cloud footprint is severely limited.
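The payoff of app-to-app (rather than network-to-network) connectivity can be illustrated as a “blast radius”: the set of applications an attacker could reach from one compromised app by following permitted connections. The application names and connection graph below are hypothetical; the sketch compares them against a flat network where every app can reach every other.

```python
from collections import deque
from typing import Dict, Set

# Hypothetical app-to-app connections permitted by zero trust policy.
ALLOWED_CONNECTIONS: Dict[str, Set[str]] = {
    "web-frontend": {"orders-api"},
    "orders-api": {"orders-db"},
    "reporting": {"orders-db"},
    "orders-db": set(),
}


def blast_radius(compromised: str, edges: Dict[str, Set[str]]) -> Set[str]:
    """Apps reachable from one compromised app by breadth-first search over allowed connections."""
    reached: Set[str] = set()
    queue = deque([compromised])
    while queue:
        app = queue.popleft()
        for nxt in edges.get(app, set()):
            if nxt not in reached and nxt != compromised:
                reached.add(nxt)
                queue.append(nxt)
    return reached


# Flat network for comparison: every app can reach every other app.
apps = set(ALLOWED_CONNECTIONS)
FLAT = {a: apps - {a} for a in apps}

print(sorted(blast_radius("web-frontend", ALLOWED_CONNECTIONS)))  # ['orders-api', 'orders-db']
print(sorted(blast_radius("web-frontend", FLAT)))  # ['orders-api', 'orders-db', 'reporting']
```

In this toy graph the difference is one app, but on a flat network the blast radius always equals the whole footprint, whereas with app-to-app connectivity it is bounded by the paths you explicitly approved.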

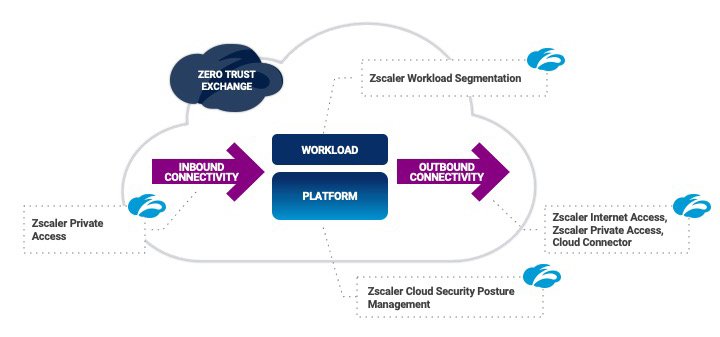

Minimize your cloud attack surface with Zscaler Cloud Protection

Zscaler Cloud Protection (ZCP) was designed from the ground up to minimize the attack surface and take the complexity out of securing your cloud footprint. The result is tighter security with lower costs and dramatically reduced complexity.

Contact us to learn more or to schedule a custom demo of Zscaler Cloud Protection.