Zscaler Blog


Zero Trust for Cloud Workloads

Over the last few years, zero trust has rightly grown in popularity, and many organizations have adopted it. The zero trust security model significantly reduces risk by minimizing the attack surface and preventing threats from spreading laterally within a network. With zero trust, organizations replace the excessive implicit trust that used to prevail with the guiding principle "never trust, always verify."

In principle, zero trust applies to all users, devices, and workloads, but in most organizations the term has become synonymous with user access to applications. As workloads move to the cloud, applying zero trust principles to cloud workloads has become just as important as applying the model to user access.

So how is this done? The starting assumption is that every entity, whether inside or outside the network, is untrusted and must be verified before access is granted. Authenticating and authorizing every access to the network establishes an identity for each workload. That identity can then be used to write least-privilege policies that restrict each workload's access to what it strictly needs.
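
To make the "verify first, then apply least privilege" flow concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not how any particular product works: the `Request` type, the `POLICY` table, and the identity names are hypothetical, and authentication is assumed to have happened in an earlier step that sets the `authenticated` flag.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Request:
    source: str          # identity of the caller (hypothetical names below)
    authenticated: bool  # outcome of a prior authentication step (assumed)
    destination: str     # resource being requested


# Hypothetical least-privilege policy: each identity maps to the only
# destinations it needs. Anything not listed here is implicitly denied.
POLICY: dict[str, set[str]] = {
    "billing-service": {"payments-db"},
    "alice": {"crm-app"},
}


def authorize(req: Request) -> bool:
    """Default deny: reject unauthenticated callers and unlisted destinations."""
    if not req.authenticated:
        return False  # "never trust": no verified identity, no access
    return req.destination in POLICY.get(req.source, set())


print(authorize(Request("billing-service", True, "payments-db")))  # True
print(authorize(Request("billing-service", True, "crm-app")))      # False
print(authorize(Request("unknown", False, "payments-db")))         # False
```

The key design choice is the default: an identity with no policy entry gets an empty set, so the absence of a rule means denial rather than access.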

Identifying users AND applications

A core principle of zero trust access is that every user must be authenticated and authorized before access is granted. Besides strong authentication methods, most implementations also draw on contextual signals, such as the posture of the user's device, when deciding whether to grant access.
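
A context-aware access decision can be sketched as a sequence of checks that must all pass. The following Python example is hypothetical: the posture signals (`device_managed`, `os_patched`), the `ENTITLEMENTS` table, and the user and application names are assumptions chosen for illustration, not fields from any real product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UserContext:
    user: str
    mfa_passed: bool      # strong authentication succeeded (assumed input)
    device_managed: bool  # hypothetical posture signal: device is enrolled
    os_patched: bool      # hypothetical posture signal: OS updates are current


# Hypothetical entitlements: the applications each user actually needs.
ENTITLEMENTS = {"alice": {"crm-app"}, "bob": {"hr-portal"}}


def decide(ctx: UserContext, app: str) -> str:
    """Every check must pass; the first failing check determines the reason."""
    if not ctx.mfa_passed:
        return "deny: authentication"
    if not (ctx.device_managed and ctx.os_patched):
        return "deny: device posture"
    if app not in ENTITLEMENTS.get(ctx.user, set()):
        return "deny: not entitled"
    return "allow"


print(decide(UserContext("alice", True, True, True), "crm-app"))    # allow
print(decide(UserContext("alice", True, True, False), "crm-app"))   # deny: device posture
print(decide(UserContext("alice", True, True, True), "hr-portal"))  # deny: not entitled
```

Returning a reason string rather than a bare boolean is only a convenience for logging; the decision itself is still all-or-nothing.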

Workloads are somewhat harder to authenticate and authorize, but with the right technologies it is entirely achievable. In most implementations, workloads are treated as untrusted and blocked from communicating unless they have been identified by a set of attributes, such as fingerprints or identities.
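
The simplest form of fingerprint-based identification is a cryptographic hash of a workload's executable compared against an allowlist. The Python sketch below assumes a hypothetical `KNOWN_GOOD` set populated when approved builds are deployed; the byte strings stand in for real binaries. Real products combine many more attributes than a single hash.

```python
import hashlib


def fingerprint(binary: bytes) -> str:
    """SHA-256 digest of a workload's executable bytes."""
    return hashlib.sha256(binary).hexdigest()


# Hypothetical allowlist, filled in when known-good builds are deployed.
KNOWN_GOOD = {fingerprint(b"approved build of billing-service v1.4")}


def may_communicate(binary: bytes) -> bool:
    # Untrusted by default: only an exact match of a known fingerprint passes,
    # so even a one-byte change to the binary produces a different digest.
    return fingerprint(binary) in KNOWN_GOOD


print(may_communicate(b"approved build of billing-service v1.4"))                  # True
print(may_communicate(b"approved build of billing-service v1.4 + injected code"))  # False
```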

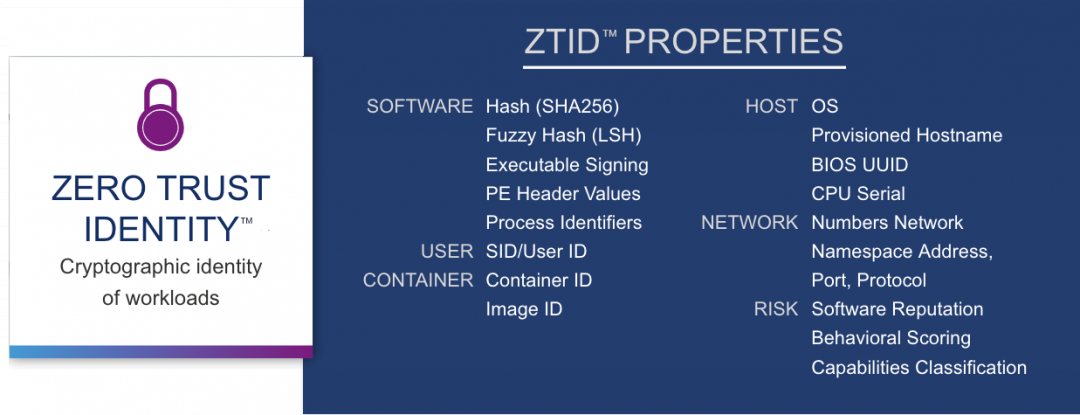

Zscaler Workload Segmentation, for example, creates a cryptographic identity for each workload. This identity is based on numerous variables, including hashes, process identifiers, behavior, container and host ID variables, reliability, and hostnames. It is verified every time a workload attempts to communicate, and it is combined with least-privilege policies to decide whether access should be granted.
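
The general idea of an identity derived from several attributes and re-checked on every connection can be sketched as follows. This is explicitly not Zscaler's algorithm: it is a hypothetical scheme that canonicalizes a few attributes as sorted-key JSON and derives a keyed HMAC-SHA256 fingerprint. `SIGNING_KEY`, the attribute names, and the `REGISTERED` and `POLICY` tables are all assumptions for illustration.

```python
import hashlib
import hmac
import json

# Hypothetical key held by the enforcement layer, never by the workloads.
SIGNING_KEY = b"example-key-do-not-use"


def workload_identity(attrs: dict[str, str]) -> str:
    """Keyed fingerprint over a canonical (sorted-key) encoding of attributes."""
    canonical = json.dumps(attrs, sort_keys=True).encode()
    return hmac.new(SIGNING_KEY, canonical, hashlib.sha256).hexdigest()


# Hypothetical attributes recorded when the workload was approved.
approved = {
    "binary_sha256": hashlib.sha256(b"billing-service v1.4").hexdigest(),
    "process_name": "billing-service",
    "container_id": "c-41f7",
    "hostname": "billing-01",
}
REGISTERED = {workload_identity(approved): "billing-service"}
POLICY = {"billing-service": {"payments-db"}}


def check_connection(observed: dict[str, str], destination: str) -> bool:
    """Run on every communication attempt: verify identity, then least privilege."""
    name = REGISTERED.get(workload_identity(observed))
    if name is None:
        return False  # attributes do not match any approved identity
    return destination in POLICY.get(name, set())


print(check_connection(approved, "payments-db"))                           # True
print(check_connection({**approved, "process_name": "nc"}, "payments-db"))  # False
print(check_connection(approved, "crm-app"))                               # False
```

Because any changed attribute yields a different identity, a process that hijacks the workload's host but differs in binary hash or process name fails the identity check before policy is even consulted.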

Least-privilege access for users AND applications

Before zero trust, the model for users was designed so that users, whether on the local network or at a remote site, were trusted and their identities were assumed to be uncompromised. In effect, users had access to every resource on the corporate network, which let cybercriminals move laterally through the network with ease.

Adopting a zero trust approach changes this model completely: it introduces the principle of least privilege, so users no longer get access to the network, only to the applications and resources they need. If a user's identity is then compromised, or a user turns malicious, the potential damage is sharply limited because far fewer resources and applications are within that user's reach.
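
The difference in potential damage can be expressed as the set of resources a compromised identity can reach under each model. In this small Python sketch the resource inventory and Alice's entitlements are hypothetical; the point is only the contrast between network-level and application-level access.

```python
# Hypothetical inventory of resources on a corporate network.
RESOURCES = {"crm-app", "hr-portal", "payments-db", "build-server", "file-share"}

# Hypothetical per-user entitlements under zero trust.
ENTITLEMENTS = {"alice": {"crm-app", "file-share"}}


def reachable_network_model(user: str) -> set[str]:
    # The user argument is ignored: network access is all-or-nothing,
    # so any connected identity can reach every resource.
    return set(RESOURCES)


def reachable_zero_trust(user: str) -> set[str]:
    # Only the applications this user is entitled to are reachable.
    return ENTITLEMENTS.get(user, set()) & RESOURCES


print(len(reachable_network_model("alice")))  # 5
print(sorted(reachable_zero_trust("alice")))   # ['crm-app', 'file-share']
```

If Alice's credentials are stolen, the attacker inherits five reachable resources in the first model and two in the second.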

As countless ransomware and malware attacks have shown, along with compromised legitimate software such as in the SolarWinds hack, applying similar concepts to workloads can significantly reduce the risk of a breach and limit the blast radius of compromised or malicious software.

Introducing least-privilege access for cloud workloads also means leaving behind flat network architectures, which create unnecessary access permissions across your cloud environment. The new policies grant access only to the users and applications the workload needs in order to function properly.
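
A flat network and a segmented one differ in what an unlisted flow means. The Python sketch below models a flow as a hypothetical `(source, destination, port)` tuple; the workload names, ports, and `ALLOWED_FLOWS` set are illustrative assumptions, not a real configuration format.

```python
from typing import NamedTuple


class Flow(NamedTuple):
    source: str
    destination: str
    port: int


def flat_allows(flow: Flow) -> bool:
    # Flat network: any workload may talk to any other on any port.
    return True


# Segmented network: only the flows each workload needs to function.
ALLOWED_FLOWS = {
    Flow("web-frontend", "billing-service", 8443),
    Flow("billing-service", "payments-db", 5432),
}


def segmented_allows(flow: Flow) -> bool:
    return flow in ALLOWED_FLOWS


probe = Flow("web-frontend", "payments-db", 5432)  # frontend skipping a tier
print(flat_allows(probe))       # True
print(segmented_allows(probe))  # False
print(segmented_allows(Flow("billing-service", "payments-db", 5432)))  # True
```

Listing allowed flows explicitly, rather than listing forbidden ones, keeps the policy least-privilege by construction: a new, unplanned path is blocked until someone decides it is needed.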

Zero trust for cloud workloads

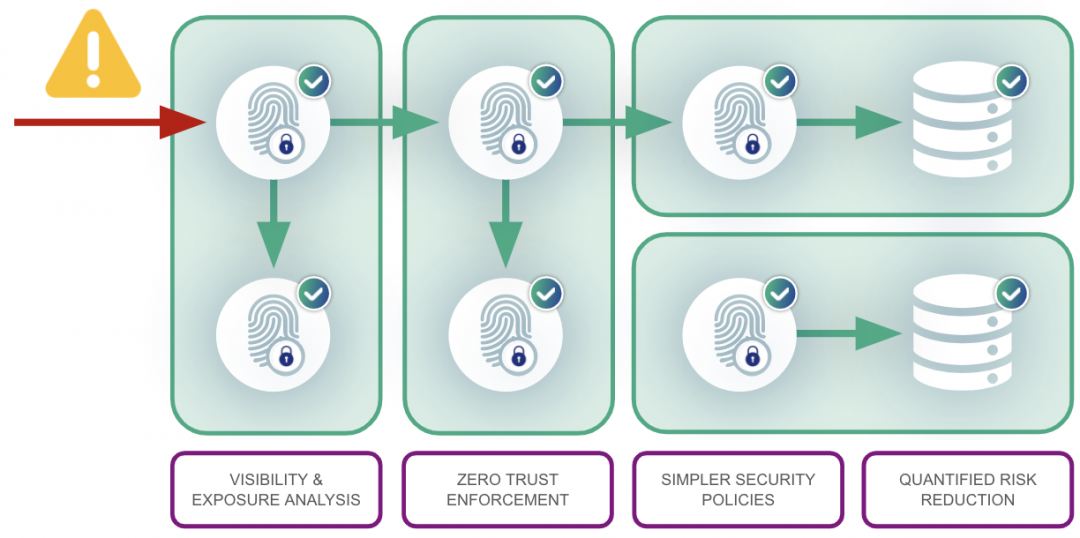

Once these steps are complete, only known and verified workloads can communicate on the network, and those workloads can reach only the users, applications, and resources they need to function properly.

As a result, risk drops dramatically and the attack surface is minimized. Malicious software can no longer authenticate and is cut off from the network entirely. Should a cybercriminal manage to compromise a workload, their ability to move laterally through the network is severely restricted.
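
Lateral movement can be modeled as reachability in a directed graph whose edges are the flows the network permits. The Python sketch below runs a breadth-first search from a hypothetically compromised `web-frontend` workload; the workload names and both edge sets are illustrative assumptions. It deliberately ignores identity checks, so it shows an upper bound: an attacker can only follow permitted paths, and with per-connection identity verification even those hops get harder.

```python
from collections import deque


def reachable_from(start: str, edges: dict[str, set[str]]) -> set[str]:
    """Breadth-first search: every workload an attacker could hop to from start."""
    seen = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in edges.get(node, set()):
            if nxt not in seen:
                seen.add(nxt)
                queue.append(nxt)
    return seen - {start}


workloads = ["web-frontend", "billing-service", "payments-db", "build-server", "hr-db"]

# Flat network: every workload can reach every other workload.
flat = {w: set(workloads) - {w} for w in workloads}

# Segmented network: only the flows the application needs.
segmented = {
    "web-frontend": {"billing-service"},
    "billing-service": {"payments-db"},
}

print(sorted(reachable_from("web-frontend", flat)))
# ['billing-service', 'build-server', 'hr-db', 'payments-db']
print(sorted(reachable_from("web-frontend", segmented)))
# ['billing-service', 'payments-db']
```

In the segmented graph, `build-server` and `hr-db` are out of reach entirely, which is the "limited blast radius" described above in graph terms.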


Disclaimer: This blog post was created by Zscaler for informational purposes only and is provided without any guarantee of accuracy, completeness, or reliability. Zscaler assumes no responsibility for any errors or omissions, or for any actions taken based on the information provided. Any third-party websites or resources linked in this blog post are provided for your convenience only, and Zscaler is not responsible for their content or privacy practices. All content is subject to change without notice. By accessing this blog post, you agree to these terms and acknowledge that it is your responsibility to verify the information and use it in a manner appropriate to your needs.
